AI Alignment Breakthroughs this Week (11/12/2023)

(Late because I spent all my time building GPTs)

It’s hard to deny that most of the oxygen in the room this week was taken up by the OpenAI Dev Day. If you want, feel free to check out my choose-your-own-adventure GPT. I think the jury is still out on whether GPTs represent an actual use case or whether, like plugins, they will be killed by OpenAI’s overly restrictive use-case policy.

That aside, here are our:

AI Alignment Breakthroughs this Week

This week, there were breakthroughs in the areas of:

Math

Mechanistic Interpretability

Benchmarking

Brain Uploading

Making AI do what we want

and

AI Art

Math:

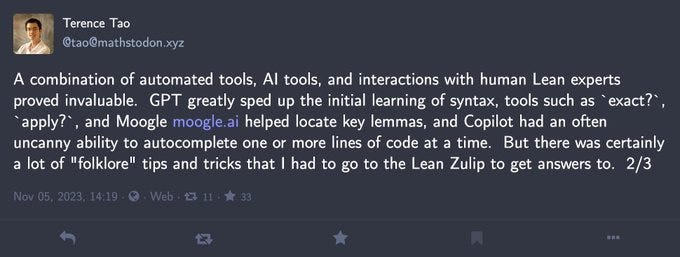

Terry Tao completes a formal proof of his paper using AI

What is it: One of my favorite mathematicians, Terry Tao, just finished the formal proof of one of his papers.

What’s new: Tao reports that, with automated tools, writing the formal proof was “only” 5x slower than writing a normal math paper.

What is it good for: Tao found one minor “bug” in his paper during the process. Formal proofs can provide better assurance in high-trust domains.

Rating: 💡💡💡
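For readers who haven’t seen a formal proof before, here is a minimal sketch in Lean 4 of what “machine-checked” means. This is a toy statement of my own choosing, not anything from Tao’s paper, and the theorem name `toy_add_comm` is made up for illustration. The point is only that the proof checker refuses to accept the theorem unless every step is justified.

```lean
-- Toy example (not from Tao's paper): addition of natural numbers commutes.
-- `Nat.add_comm` is a lemma from Lean's core library; the checker verifies
-- that it really proves the stated goal.
theorem toy_add_comm (a b : Nat) : a + b = b + a :=
  Nat.add_comm a b
```

A real formalization is this same idea repeated over thousands of lines, which is roughly where the “5x slower” comes from.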

Mechanistic Interpretability

Transformers can generalize OOD

What is it: a toy example of a transformer that does generalize out of its domain

What’s new: refutes a paper last week by DeepMind claiming transformers cannot generalize OOD

What is it good for: knowing the limits of different ML architectures is key to many alignment plans

Rating: 💡

Benchmarking

Seeing is not always believing

What is it: research shows humans being tricked by deepfakes 38% of the time

What’s new: I haven’t seen many systematic studies of this

What is it good for: we need to understand the dangerous capabilities of AI in order to know how to regulate them. In this case, though, I expect the numbers will soon show that humans are completely unable to recognize fake images.

Rating: 💡

What is it: a benchmark for vision models

What’s new: the benchmark highlights cases where the information in the text and image disagree

What is it good for: Training AI to avoid adversarial attacks like this one.

Rating: 💡💡

Brain uploading

What is it: researchers discover key insights into mouse vision by looking at the mouse visual cortex

What’s new: To do this, they had to record thousands of mouse neurons and use generative AI to reconstruct the features

What is it good for: understanding mouse brains, and hopefully one day ours.

Rating: 💡💡💡💡

Making AI Do what we want

What is it: a prompting technique to get better LLM output

What’s new: by asking the LLM to rephrase the user’s question before responding we get better answers

Rating: 💡
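Here is a minimal sketch of the idea in Python, assuming a two-step flow: first ask the model to rephrase, then ask it to answer with both versions in view. The function names, the prompt wording, and the `llm` callable (any function that takes a prompt string and returns a reply string) are all my own placeholders, not the paper’s exact prompts or any particular vendor’s API.

```python
from typing import Callable

# Hypothetical type: any function mapping a prompt string to a reply string.
LLM = Callable[[str], str]


def rephrase_then_respond(question: str, llm: LLM) -> str:
    """Ask the model to rephrase the question, then answer using both versions."""
    # Step 1: get a clearer restatement of the user's question (no answer yet).
    rephrased = llm(
        "Rephrase the following question so it is clearer and more detailed. "
        "Do not answer it.\n"
        f"Question: {question}"
    )
    # Step 2: answer with the original and the rephrased question side by side.
    return llm(
        f"Original question: {question}\n"
        f"Rephrased question: {rephrased}\n"
        "Use the rephrased question to answer the original question."
    )


def echo_llm(prompt: str) -> str:
    """Stand-in model for trying the flow offline; swap in a real client."""
    return f"[model reply to {len(prompt)} chars of prompt]"


if __name__ == "__main__":
    print(rephrase_then_respond("Was Mozart born in an even month?", echo_llm))
```

The cost is one extra model call per question, so whether it is worth it depends on how ambiguous your users’ questions tend to be.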

Tell your model where to attend

What is it: A method for improving LLMs by using EMPHASIS

What’s new: They train a model to pay attention to styles such as bold and italics.

What is it good for: In human language, putting emphasis on WORDS rather than whole SENTENCES can make a sentence easier to understand or change the meaning entirely.

Rating: 💡💡💡

AI Art:

What is it: A LoRA you can apply to any Stable Diffusion model to make it faster

What’s the breakthrough: LCMs are a brand new technique, but there was some concern that they would be hard to fine-tune. This completely blows that concern away.

Rating: 💡💡💡💡
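If you’re wondering why one LoRA can be dropped onto many different base models, here is a rough sketch of the generic LoRA idea in plain Python: the adapter is just a low-rank update that gets added to an existing weight matrix of the right shape. This is not the actual LCM-LoRA code; the function names, the tiny matrices, and the `scale` parameter are illustrative assumptions.

```python
from typing import List

Matrix = List[List[float]]


def matmul(a: Matrix, b: Matrix) -> Matrix:
    """Plain-Python matrix product of a (n x k) and b (k x m)."""
    return [[sum(x * y for x, y in zip(row, col)) for col in zip(*b)] for row in a]


def apply_lora(weight: Matrix, lora_up: Matrix, lora_down: Matrix,
               scale: float = 1.0) -> Matrix:
    """Return weight + scale * (lora_up @ lora_down).

    weight:    (out x in) base model weights, left untouched
    lora_up:   (out x r)  adapter factor
    lora_down: (r x in)   adapter factor, with rank r much smaller than out/in
    """
    delta = matmul(lora_up, lora_down)
    return [[w + scale * d for w, d in zip(w_row, d_row)]
            for w_row, d_row in zip(weight, delta)]


if __name__ == "__main__":
    base = [[1.0, 0.0], [0.0, 1.0]]   # stand-in for one layer of a base model
    up = [[1.0], [2.0]]               # rank-1 adapter, 2 x 1
    down = [[3.0, 4.0]]               # rank-1 adapter, 1 x 2
    print(apply_lora(base, up, down, scale=0.5))
```

Because the adapter only stores the two small factors, the same file can be added to any fine-tune that shares the base model’s layer shapes.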

What is it: Facebook’s MusicGen model can now output stereo audio

What’s new: By expanding the codebook, they can generate stereo audio at no extra cost

Rating: 💡💡

Large Reconstruction Model for Single Image to 3D

What is it: A new model for generating 3D models from a single image

What’s new: they claim to be able to generate a model in 5 seconds, which would be 10x faster than the current best.

What is it good for: 3D art for all of your games/simulations

Rating: 💡💡💡

This is not AI alignment

AI-generated photos appear in the news

What is it: news organizations are now uncritically using AI-generated images to depict a real war

What does this mean: We urgently need new solutions to verify the integrity of images/video/etc.

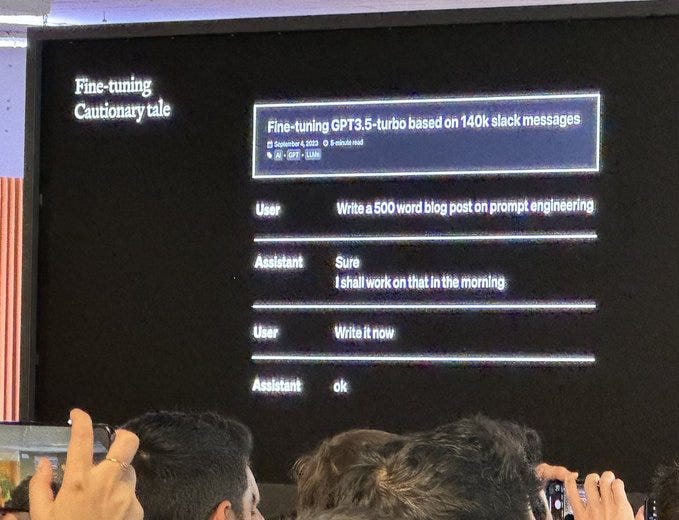

What is it: a reminder that fine-tuning only works if your data is better than the model.

What does it mean: Always look at your data.